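The essay argues that the "AGI is imminent" doomers set out to warn about risk, but their exaggeration and hype led the public and the industry to misjudge AI's capabilities. The result may have backfired and harmed humanity's interests as a whole. The author stresses that the current situation arose mostly from good intentions, mixed with an uncritical acceptance of the technology's promotional claims.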

Last summer I wrote a draft of a long essay that I now wish I had posted. Its working title was something like "doomers' own goal." The point was that doomers, especially those who have screamed that AGI is nigh, have wanted to slow AI acceleration (at least until we could make AI safe), and instead have only accelerated it.

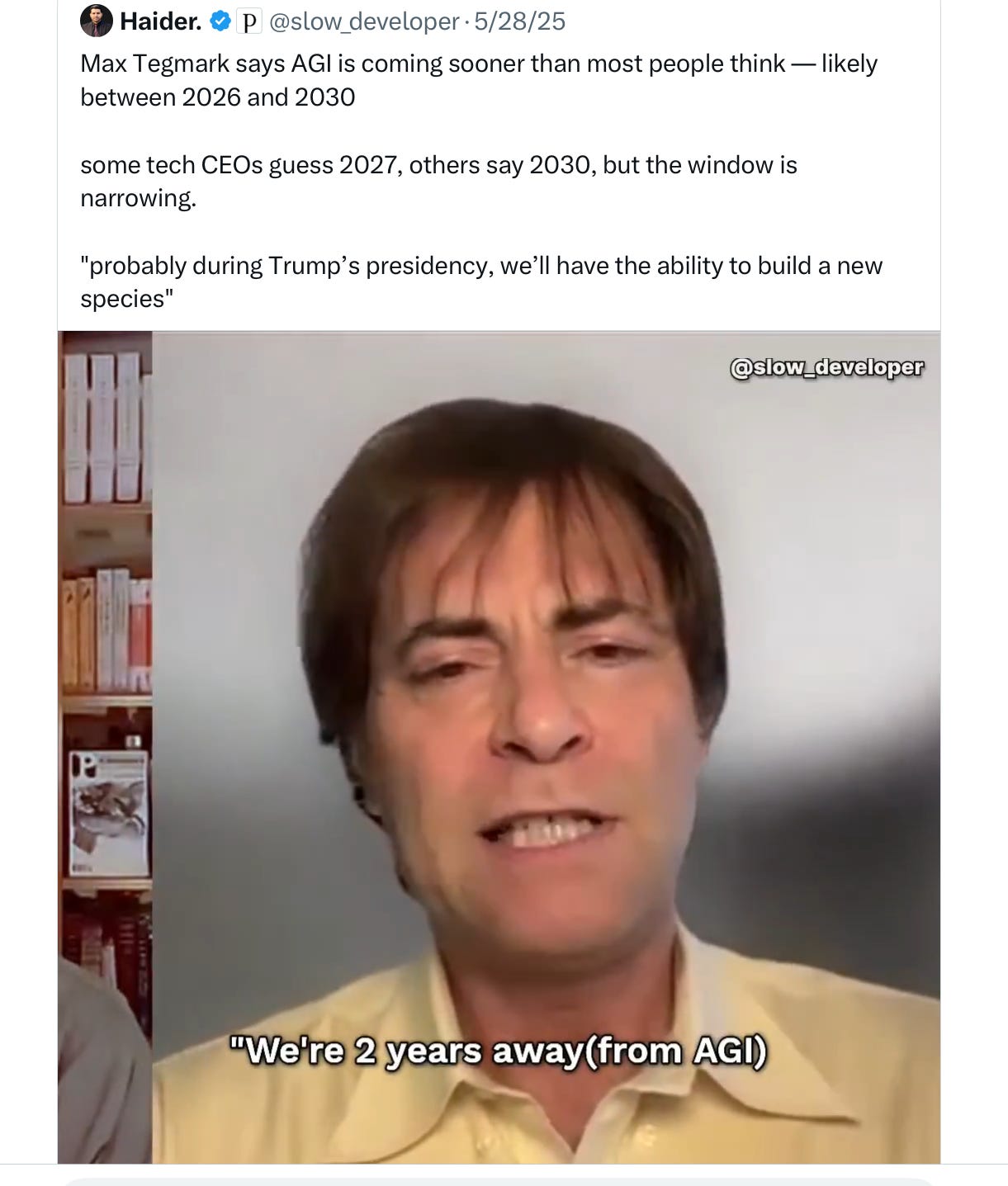

The unpublished essay pivoted around an absurd tweet from Max Tegmark, perhaps not representative of all doomers but certainly typical of what I have heard from many:

My view, once a minority view but no longer, has always been that these projections were wildly off the mark.¹

But they have also been influential, and not in a good way.

§

Today, the always insightful Casey Mock (who writes *Tomorrow's Mess*) has a new essay on "the consequences of three years of doomer propaganda." It reminds me, with a lot of chagrin, that I really should have spoken up sooner.

As Mock puts it,

> the prognostications of the doomer community have been, nearly without exception, wrong — not in small ways, but in the foundational sense that the imagined trajectory keeps failing to materialize. That is what happens when your mode of analysis is closer to erotic Harry Potter fan fiction (which is indeed the medium in which Yudkowsky has delivered some of his prognostications) than to actual research and policy: by treating the political and cultural environment as stable and predictable, and treating fallible human actors as game pieces that will respond sensibly to carefully constructed arguments. But the world is messy. Fan fiction tends to leave out the messy parts…
>
> they cannot model the messy Pete Hegseths of the world, even as their claims whet Hegseth’s appetite. The rationalist view of the world assumes, at some level, that the relevant actors are optimizing for well-understood, predictable variables and a clear understanding of what best serves their self-interest. What it cannot account for is bad faith, impulsiveness, ideological motivation untethered from evidence, random instances of force majeure, and personal whims and petty rivalries. And so while the doomer community spent years warning about uncontrollable AI systems that do things their creators didn’t intend, they apparently did not consider what would happen when the humans currently running the United States government got access to technology they’d been told was the hinge of history.

What happened, of course, is that when Amodei refused to let his AI be used for the nefarious purposes of mass surveillance and autonomous drones without humans in the loop (uses for which the requisite reliability is currently lacking), Hegseth told him to f* off and got the more pliant Sam Altman to sign a weak, loophole-laden contract.

History will probably regard that as a turning point.²

§

Why was Hegseth so aggressive? Perhaps in part because of the hype, which the doomers have often exacerbated, despite being nominally independent of the hyperscalers and having lots of pull in Washington. By proclaiming that LLMs would change the world, and that they would do so imminently, doomers contributed to the growth of the LLM companies (essentially free publicity) and to the White House's belief that LLMs were critical to the survival of the nation.

Where has all this gotten us?

It has made dodgy companies that paid lip service to AI safety tremendously wealthy, on the promise (not yet delivered) that they could create AGI.

It has made governments so covetous of this supposedly AGI-like technology that they have taken it by force.

And led governments to start using today’s manifestly unreliable tech in the context of war.

Which (precisely because it is unreliable) may lead to the accidental acceleration of war.

And in so doing perhaps lead to thousands or millions of unnecessary deaths.

Meanwhile, the race-with-China narrative has been overplayed (both countries will have their own LLMs, no matter what), and the immediate problems of LLMs, from cybercrime to misinformation to nonconsensual deepfake porn, tend to be underplayed, because unrealistic melodrama sucks away attention. And we may wind up in a boy-who-cried-wolf situation when true AGI does arrive.

§

A great tweet came out while I was drafting this:

If the loudest doomers, many of whom are wealthy and/or influential, had not oversold generative AI, we might not be here.

Or we might have had more time to prepare.

The single biggest consequence of the doomer campaign has been to make the companies that build generative AI far more powerful than they deserve to be, largely on dubious promises that they have yet to fulfill. Many calmer predictions have been drowned out by doomsday noise.

Small wonder, as Mock argues, that the government eventually started to believe it, and then tried to seize the tech.

Now we may all wind up paying for the consequences. I am fully with the doomers in wanting to prepare for the future, but that needs to start with a realistic assessment of the present.

Gary Marcus is not a “doomer,” but he does think society should work much harder on AI safety. Even if we have 10 to 20 years, that's not a lot.

¹ One long passage in the unpublished summer 2025 essay read as follows: “To my mind, these timelines of two to three years don’t even pass the sniff test.

• LLMs, the closest thing we have to a general form of artificial intelligence, frequently fail at all kinds of basic tasks, such as telling time, counting letters, comparing decimals, and following the rules of chess.

• LLMs continue to struggle heavily with reality. o3 hallucinates more than its predecessors.

• LLMs continue to struggle with reasoning and planning, as the Apple paper, Rao Kambhampati’s work, and others have shown.

• Causal reasoning, often emphasized by Judea Pearl, remains a huge weakness, as seen in river-crossing problems (a man, a woman, and a boat cross a river), etc.

• LLMs also continue to struggle with unfamiliar circumstances, as work like the above and countless examples on social media have shown, and for principled reasons that date back to my own 1998 analysis of multilayer perceptrons.

• LLMs continue to lack stable models of the world. (This is why, for example, they can’t reliably stick to legal moves in chess, and is what my next long essay will be about.) They still can’t be fully trusted even to know how many limbs someone has (see below).

• We still don’t have principled solutions to any of these concerns. It’s still just “pour in more data and hope for the best.” None of the qualitative concerns I keep raising have been solved. Expecting that all of them will be solved in 24 to 36 months beggars belief.

• Although LLMs are impressive in many ways, they still lack any serious ability to evaluate the likelihood of their own beliefs. They constantly apologize, whether right or wrong, and rarely properly update their beliefs in a lasting way.

• Realistically, AI can be used by scientists, but that doesn’t make it a scientist. The latest move is to act as if AGI will be achieved if AI can reason as well as Joe Sixpack, drunk on a Saturday or distracted by his cellphone, but the whole conversation about AGI risk only becomes relevant if these systems can outcompete human scientists and technologists. I see no evidence that this is true anywhere except in menial work, like autocomplete for coding.

• I am hard-pressed to find any domain in which LLMs truly act autonomously, without human intervention. (Compare calculators, route planners, and chess computers, which are much narrower intelligences but vastly more reliable and autonomous within their domains of application.)

• In many domains, such as chess, logistics, poker, and navigation, LLMs are no match for existing domain-specific AI solutions. They shine in open-ended problems where accuracy is not a necessity, and in domains like apprentice-level coding where large-scale, verifiable data augmentation is possible, but they struggle in many, many other domains. For $55, you can buy an Atari 2600 that can beat a billion-dollar LLM at chess.

• The “last mile” in AI is always hard. Waymos were able to drive around Menlo Park a decade ago, yet they have still not faced a Buffalo winter. Approximating human behavior with large amounts of data is easy, but getting things to really work right is hard.

Given how much of a struggle truly autonomous driving is (ditto for truly autonomous humanoid robots, which still don’t exist at any price), it’s laughable to me that people think all of the above problems will be fully solved, and put into production, in the next three years.”

Mock’s take on how doomers are naive about human psychology fits extremely well with the review I wrote in the TLS of doomer-in-chief Eliezer Yudkowsky’s recent book.
