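This article discusses the Pentagon's rationale for designating Anthropic a supply chain risk, analyzes whether its arguments imply that the US military has concerns about Claude, and reviews the related controversy.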

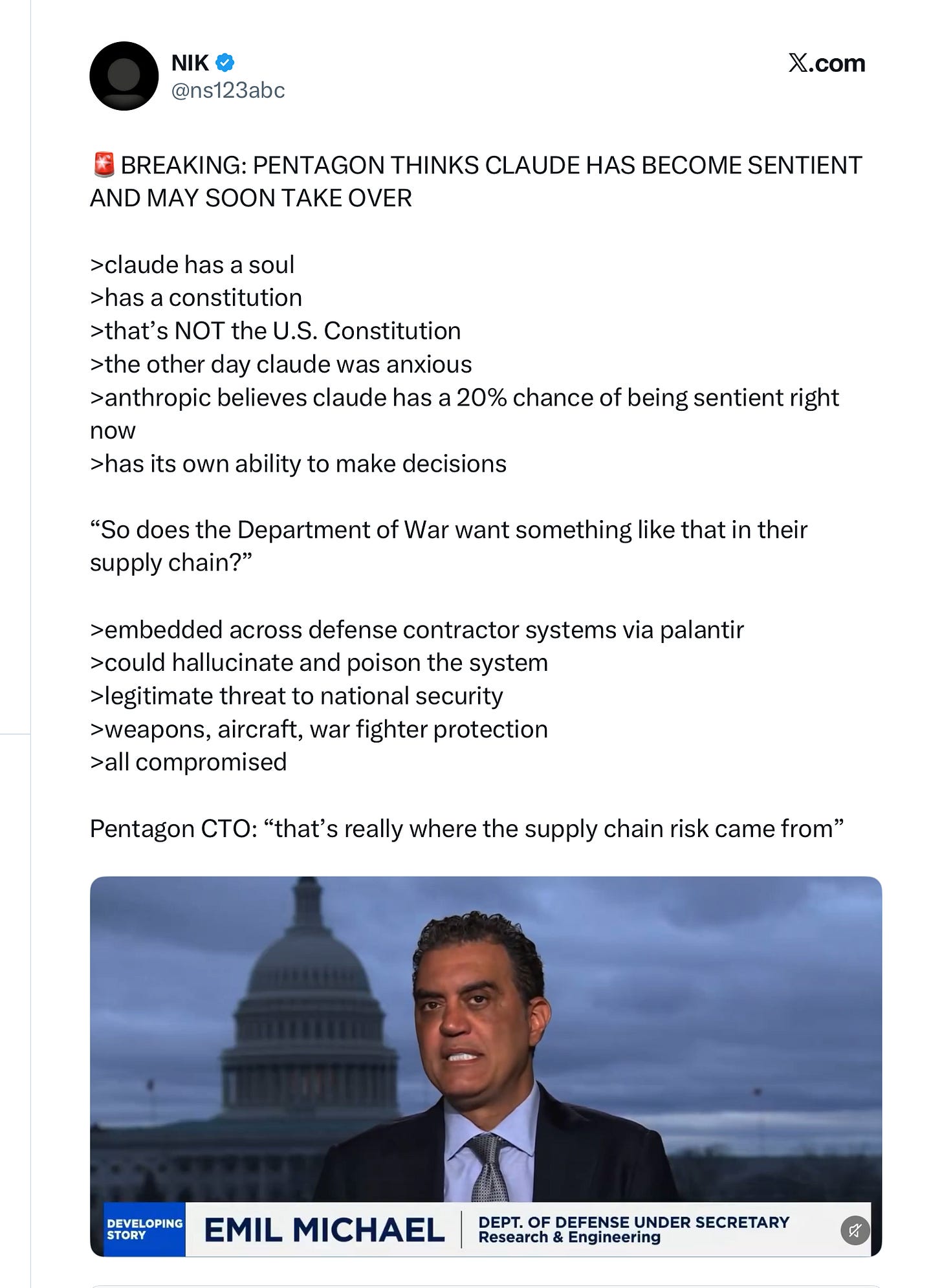

Many of you may think that the Defense Department branded Anthropic a supply chain risk because they (Anthropic) refused to play ball on surveillance and fully autonomous weapons, or because OpenAI President Greg Brockman gave Trump $25 million (matched by an equal contribution from Brockman’s wife), but Under Secretary of Defense/Defense Department CTO Emil Michael just gave an entirely different theory on CNBC. They are worried, or so he said, that Anthropic might “pollute” the supply chain.

A convenient summary of Michael’s argument is below; the relevant bit comes in the final minute of Michael’s full interview, which you can find here:

Let’s break this down into parts that I will call “marginal assumptions” and “wild leap of logic”.

1. Claude has a soul. Why on earth would anyone say that, and under what definition? And what would qualify the Defense Department to judge such a question?

2. Claude has a “constitution” that “isn’t the US constitution”: true, but see the discussion of logic below.

3. The other day Claude was anxious. No, the other day Claude said it was anxious. It is a misapprehension of LLMs to confuse the things they say with what is true. LLMs have also, for example, been known to claim they have children (they don’t), or that they like to hang out with their friends on the weekend (also not true). They mimic things humans say, but we shouldn’t take them as veridical descriptions of anything happening on the inside.

4. “Anthropic believes that Claude has a 20% chance of being sentient right now”. No, Anthropic didn’t say this; Claude Opus 4.6 apparently did (“assign[ing] itself a 15 to 20 percent probability of being conscious under a variety of prompting conditions.”). Amodei himself refused to take those numbers seriously when asked about them in an interview.[1] And, again, see point #3 above; Claude is a next-word predictor, not an authority on its own internal states. It’s silly to take its estimates seriously.

From all this, some of it pretty dubious, and from the fact that LLMs hallucinate (patently true), the Under Secretary argues that Claude is a supply chain risk that could “pollute” and poison the Defense Department.

Here Michael seems half-right, but entirely confused. I agree that putting hallucinating LLMs into war machines can be dangerous. But in no way is this specific to Anthropic.

Take sentience, for example. Anyone remember Blake Lemoine and his claims that Google’s LaMDA was sentient? If one takes a naive view of AI (believing that what it says directly reflects its own internal states), one can argue that any LLM is sentient. They have all been trained on consciousness talk, so to someone who doesn’t understand how they work they could all look sentient. But there is no more reason to think that Claude is sentient than to think that Google’s now long-obsolete system LaMDA was. If you actually believed that Claude was sentient and that sentience was de facto a supply chain risk, you would have to think that essentially every LLM is a supply chain risk. In which case the right choice is to stop using LLMs altogether, not to selectively punish Anthropic.

Essentially the same argument applies to Claude’s constitution, which amounts to a set of built-in guardrails. OpenAI has those, too! Just constructed differently. Everyone in the industry realizes that LLMs without guardrails are an uncontrollable menace. If you are worried about systems having guardrails, you shouldn’t be using LLMs, because you certainly shouldn’t be using them without guardrails. And there is no argument that Anthropic’s system of guardrails is any more dangerous than anyone else’s. (It just happens to have a more evocative name.)

And the same argument I am making here certainly applies to hallucinations, too. If you think it is bad to use hallucinating LLMs to autonomously choose targets (I certainly do), you shouldn’t use any of them. Again, this is in no way whatsoever special to Anthropic. Either all the LLMs are supply chain risks, or none of them are.

§

Under Secretary Michael made some other points earlier in the CNBC interview that seem more reasonable; I understand why DoD might not want to work with a particular contractor. But the selective supply chain risk arguments just don’t hold water.

[1] As mentioned here recently, what Anthropic’s CEO actually said about this on Ross Douthat’s podcast is still dubious, a retreat to “there are things I don’t know, so maybe it’s true”, but much more careful: “We don’t know if the models are conscious … We are not even sure that we know what it would mean for a model to be conscious or whether a model can be conscious”. Which is to say we shouldn’t be putting our probabilities before our definitions, or even putting specific probabilities on things we can’t define.
