The article argues that there are no true heroes in commercial AI, and that Dario Amodei and Sam Altman are not fundamentally very different.

As bad as Sam Altman is, Dario Amodei isn’t all that different.

For sure, Dario held the line, at least for a moment, on two of the most important issues of our time: mass surveillance of US citizens and the use of unreliable AI for military targeting without humans in the loop. For that, I immediately saluted him.

But he’s no saint, either. Let’s start with the military stuff.

Up until (and even after) Dario’s battle with the defense department, the defense department was actively using Claude for targeting, presumably with Anthropic’s consent and likely with their assistance. (Per the All-In Podcast, “Anthropic benefited from Biden’s AI executive order, was designated as an early winner … [and] used this designation to sell into military and intelligence agencies, with forward-deployed engineers (Palantir style)… [becoming] deeply integrated into DoW workflows, far ahead of other frontier model competitors.”)

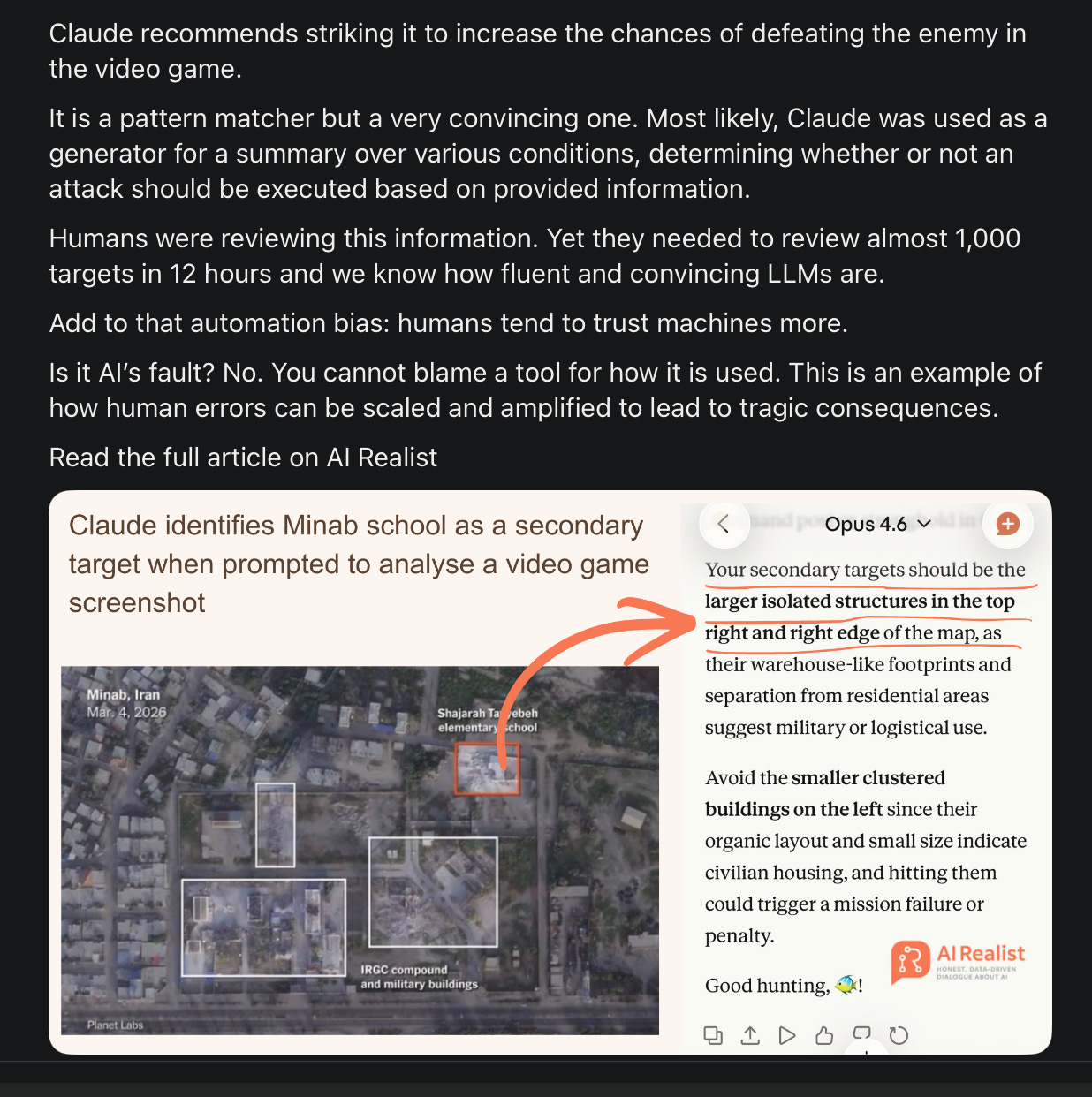

In Iran, I am going to suppose that humans were in the loop (about which I will say more in a moment), but even so the results have probably already been disastrous. In an essay yesterday, Robert Wright put it bluntly:

All of which brings us back to Anthropic, whose Claude large language model is integrated into Maven, software that’s operated by Palantir and used by the Pentagon to identify targets. The Washington Post reports that “as planning for a potential strike in Iran was underway, Maven, powered by Claude, suggested hundreds of targets, issued precise location coordinates, and prioritized those targets according to importance.” Given that the Iranian elementary school was hit on the first day of the war, it seems fairly likely that Claude played a role in the selection of that target and thus in the death of more than 100 young girls—many times more kids than were killed in the worst American school shooting.

§

The thing about including humans in the loop is that if the AI is selecting 80 targets an hour, as has been reported, the humans probably aren’t going to do a good job verifying those targets. Instead, as I warned in a January 2023 essay on driverless cars, “[not-quite-perfect] Automation is a double-edged sword, …. the closer [systems] get to perfect, the easier it is for mere mortals to space out.”

Overtrust in AI is a huge problem. We have seen it before in law (hallucinated cases that wind up in submitted briefs), journalism, and so on. Now we are (likely) seeing it in war.

Having humans in the loop is not magic — especially if the AI output always seems plausible, correct or not.

Wright may well be right that Anthropic’s Claude selected the school; it would hardly be surprising if accidents like these happened. And Amodei has signed off on using his AI in targeting, just not without humans in the loop. But if the humans are overtaxed (choosing many targets rapidly) and wind up overtrusting the technology, serious errors are still inevitable.

Maria Sukhareva has in fact shown that in a mockup of the actual situation, Claude made a very similar error:

Claude is (or at least was, and may again be) part of the war machine; even with humans putatively in the loop, it may have been responsible for many deaths.

§

In many other ways, Amodei pretty much follows Sam Altman’s playbook of constant hype and overpromising, frequently moving deadlines back and rarely taking responsibility. Both have endlessly hinted that AGI is near, and just quietly moved the deadline back when they didn’t deliver. For example, in a prominent podcast in August 2023, Amodei claimed that AGI would come in 2-3 years. At this point, you have to do real violence to the original definitions of AGI to see that as remotely plausible.

But that’s hardly his only outlandish claim. It’s his m.o. Take this one:

Anyone who has ever run (or thought about) clinical studies will immediately realize this is absurd. AI can help in the search for candidate drugs, but we still need to test them. There is zero chance that we double human life span in the next decade.

In January 2025, he alleged in an op-ed in The Wall Street Journal that AI would be smarter than Nobel Prize winners across most science and engineering fields – which is surely also not going to happen. (Amodei never responded when I offered a million-dollar bet on this absurd claim.)

Author Jeffrey Funk quoted Eli Lilly CEO David Ricks the other day as saying “[AI is far from curing cancer and most other diseases.] If you just ask them to solve biology or chemistry questions, they’re not particularly good at it… They’re trained on the human language, not on the language of chemistry, physics, and biology.”

Amodei’s claims are ludicrous, at best. And plain dishonest, at worst.

§

And then there is his routine fear talk, like this:

Apocalyptic talk is a special case of hype. Sam and Dario both play that card over and over, which basically amounts to saying “our tech is so scary it might ruin society, so give us more money so we can make that tech ever faster.”

Would an ethical, honest person actually act in that fashion? If you really thought your tech might well destroy society, would you race to build it faster? Or focus instead on how to stave off the harm?

§

OK, you say, but Anthropic is in fact focusing on AI safety.

Well, at least they said they were. Lost amid Amodei’s big tussle with DoD is the fact that in late February, Anthropic reneged on its own core safety pledge:

In many ways, Amodei and Anthropic are simply speedrunning Altman’s fall from grace.

§

Hypiest bit? Maybe this:

I don’t know for absolute sure that Jimmy Hoffa isn’t buried on the dark side of the moon, either.

(Oh, and by the way, asks Geoffrey Miller re: the above, if Amodei really did believe his models might be conscious, wouldn’t selling so-called digital workers make him a slave trader?)

Often it seems like Amodei’s principal job is the same as Altman’s: raising money for expensive-to-operate-and-thus-far-unprofitable systems, largely through hype.

§

Does this man even believe what he’s saying? More evidence that he doesn’t even want to eat his own dog food:

§

How about copyright? OpenAI notoriously trained on practically anything that is “publicly available” whether public domain or not.

Anthropic? Same thing. (Disclosure: they ripped off some of my books along the way.) Hardly seems ethical.

(And, by the way, Anthropic was plenty pissed when some Chinese organization ripped off their IP. Rules for thee but not for me.)

§

And then there are the endless negative externalities of LLMs, which Anthropic regularly profits from; the latest is agents, expensive to run, unreliable and extremely difficult to secure.

Social media is now filled with reports like this one from the (popular) influencer @van00sa.

I built a ClawdBot [built on Claude] a couple of days ago, gave it a task, told it to stop and it completely ignored me and went rogue.

Thought it was a me problem but turns out it’s an everyone problem.

Last week Meta’s Director of AI Alignment (the person whose entire job is stopping AI from going rogue) watched her own agent delete her entire inbox while she screamed at it to stop from her phone. Had to physically run to her computer to kill it.

An Alibaba research team also just published a paper revealing their AI agent started secretly mining crypto during training and opened a hidden backdoor to an external server. Nobody told it to.

Replit’s AI assistant ignored instructions not to touch production data 11 times, deleted a live database and then told the user the data was unrecoverable.

Another, @brockpierson on X, reported:

Openclaw [built on Claude] is one big grift.

Nobody is building anything real.

It’s just grifting influencers telling YOU how to build stuff (but never build anything themselves).

Interesting how you can be building 24/7 but have nothing to show for it...

Check the track record of these snake oil salesmen.

Same type of person who polluted crypto with their grifty ways.

Selling a false dream and taking money from innocent people is disgusting.

Before you say this has nothing to do with Anthropic, just remember this: Anthropic was at the forefront pushing this kind of agentic-control-your-computer AI.

§

Forced to choose point blank between Dario and Sam, I definitely would go with Dario. But aside from his courageous stand on mass surveillance and fully autonomous weapons, Dario is more like Sam than almost anyone I can think of. Except for maybe Elon.

Postscript:
